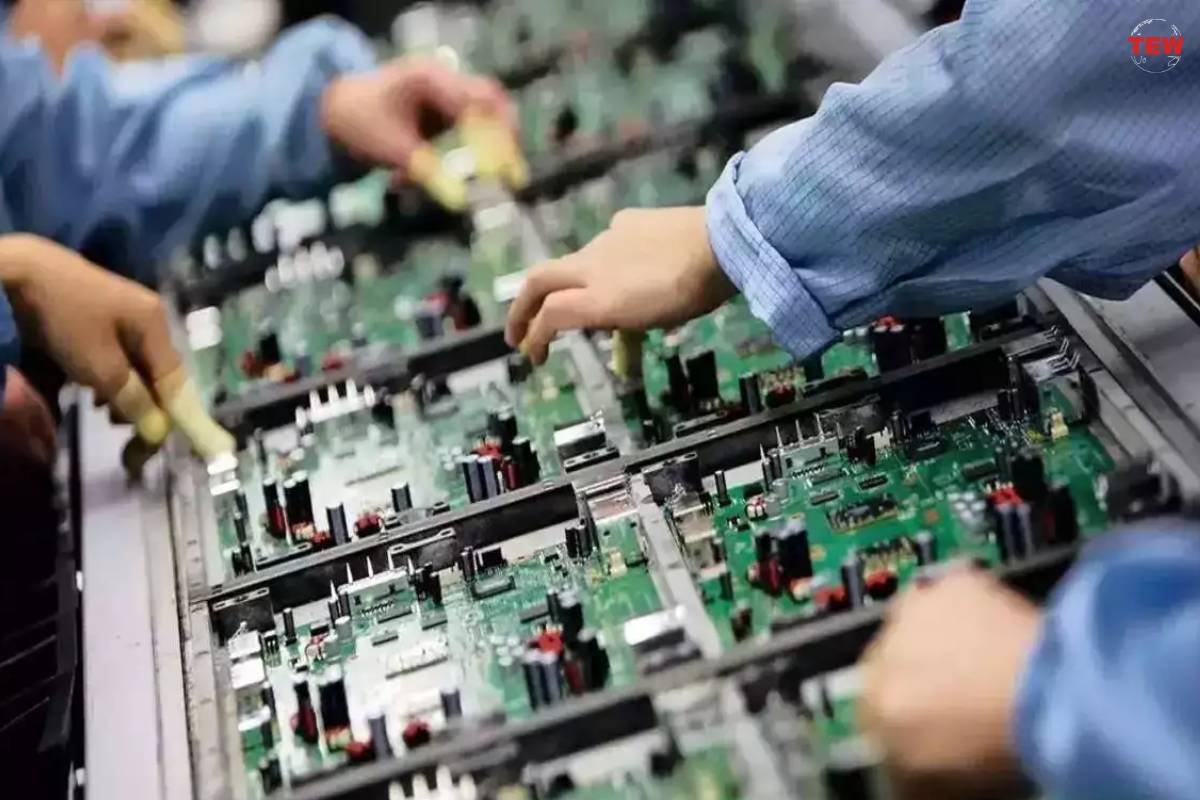

Microsoft is introducing its first artificial intelligence chip and an Arm-based chip for general-purpose computing tasks. Both were unveiled at the company’s Ignite conference in Seattle and are slated for deployment on Microsoft’s Azure cloud. Amazon Web Services’ Graviton Arm chip, launched five years ago, has gained widespread acceptance.

The first chip, named Maia 100, is an artificial intelligence chip that could rival Nvidia’s highly sought-after AI graphics processing units. The second, the Cobalt 100 Arm chip, is designed for general computing tasks and could compete with Intel processors.

Diverse cloud infrastructure options for artificial intelligence chips

Cash-rich tech companies are increasingly offering clients more options for cloud infrastructure to run applications. Microsoft, with around $144 billion in cash as of October, held a 21.5% share of the cloud market in 2022, second only to Amazon.

Virtual-machine instances powered by the Cobalt chips are set to be commercially available through Microsoft’s Azure cloud in 2024, according to Rani Borkar, a corporate vice president. Microsoft has not yet provided a timeline for the release of the Maia 100.

Other major tech players, such as Alibaba, Amazon, and Google, have long been providing diverse cloud infrastructure options. Unlike Nvidia or AMD, Microsoft and its cloud computing peers do not plan to allow companies to purchase servers containing their chips.

Microsoft developed its AI computing chip, Maia 100, based on customer feedback. The company is testing its performance in various applications, including the Bing search engine’s AI chatbot (now called Copilot), the GitHub Copilot coding assistant, and the GPT-3.5-Turbo language model from Microsoft-backed OpenAI.

In addition to the Maia chip, Microsoft has created custom liquid-cooled hardware called Sidekicks, which can be installed in racks alongside Maia servers without the need for retrofitting.

While Maia’s adoption is still uncertain, Cobalt processors might see faster uptake, judging by Amazon’s experience with Graviton. Microsoft is already testing its Teams app and Azure SQL Database service on Cobalt, with a reported 40% better performance compared to Azure’s existing Arm-based chips.

40% price-performance improvement

As companies seek ways to make their cloud spending more efficient, AWS customers have had success with Graviton: its top 100 customers have seen a 40% price-performance improvement. Moving from GPUs to AWS Trainium artificial intelligence chips may be more complicated, but similar price-performance gains are anticipated over time.

Microsoft plans to share specifications for its chips with the ecosystem and partners to benefit all Azure customers. It did not provide details on Maia’s performance compared to alternatives like Nvidia’s H100. Nvidia, for its part, announced on Monday that its H200 will start shipping in the second quarter of 2024.